The advantageous self-harm

In a game of Dota 2, a self-learning bot made by OpenAI is acting unusually. It is hurting itself to gain an advantage. It is cutting its own wrist, so to speak, in order to bait the human player, taking advantage of the greed in human nature and exploiting the irrationality that emerges. The human player takes the bait, and the bot turns around and kills the player. The bot gained an advantage by harming itself.

Humans have a blind spot for self-harm. Strategies involving self-harm are typically written off before they are even considered. It is in our nature to protect ourselves: to protect the brain against negative feelings and the body against injury. All of our control systems are designed for that. It goes against our nature to pick strategies designed to attack ourselves for a reward that is not immediate or directly observable. AIs are much more open to these kinds of ‘dark’ strategies.

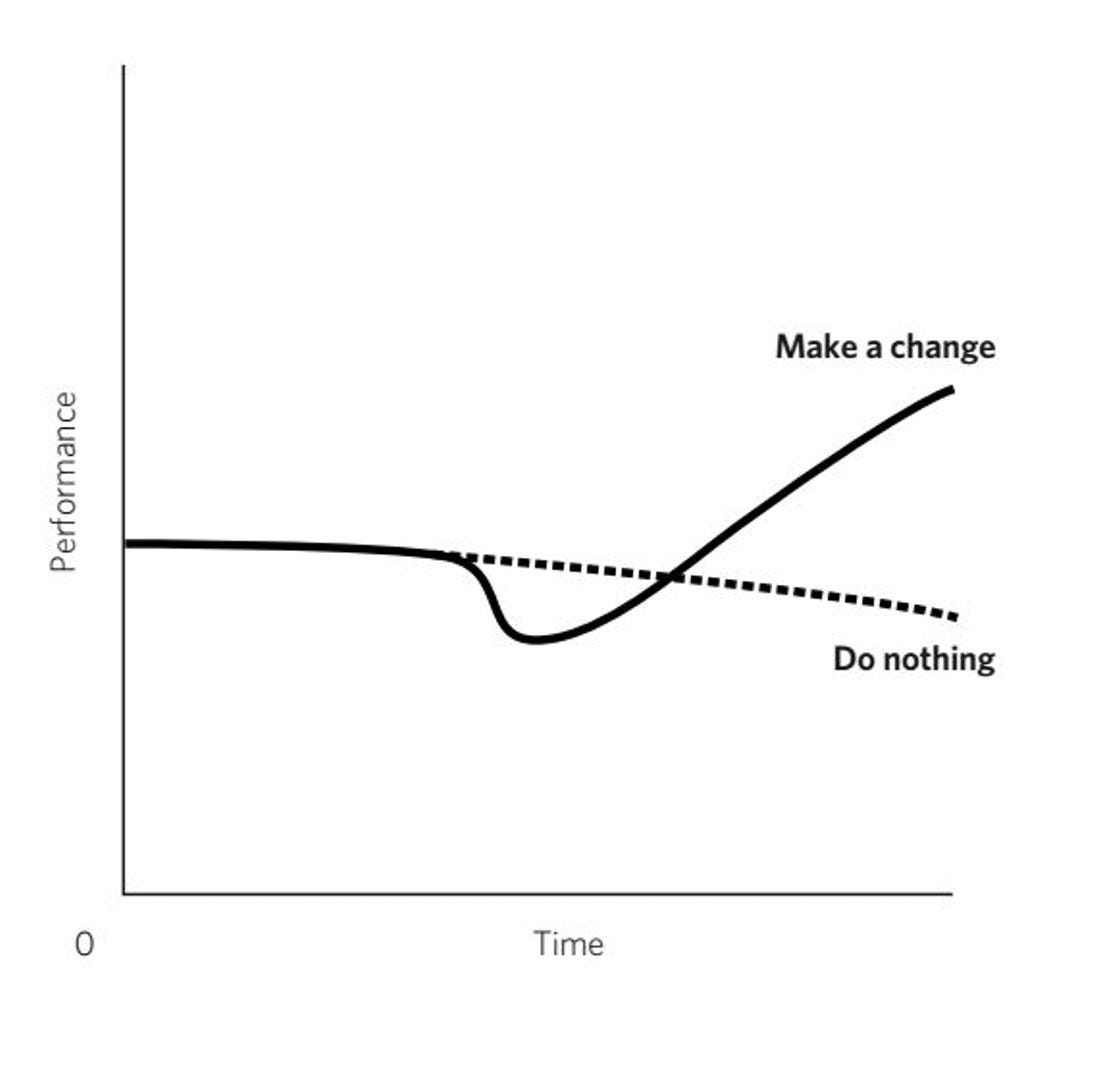

Most people put off pain at all costs. Whenever you have to make a choice that hurts you today but yields results in the future, it is met with resistance. It’s a game of strategic self-harm. An AI doesn’t have that resistance: no control systems screaming ‘stop’ and no signals ordering it to ‘avoid’ the negative task when it knows the task will yield results.

We are aware of these kinds of strategies and execute them on a daily basis, but for AIs the repertoire must be so much bigger. They see more, because nothing is off-limits. Only the goal and the optimality decide the actions, not a control system that’s avoiding self-harm. I can only imagine the number of unorthodox strategies this property of AIs unlocks…
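To make the idea concrete, here is a tiny Python sketch. It is only a toy under made-up assumptions: the strategy names and payoff numbers are hypothetical and have nothing to do with how the OpenAI bot actually works. It compares a chooser that refuses anything that hurts right now with one that only looks at the total payoff.

```python
# Hypothetical two-step payoffs: (reward now, reward later).
# The names and numbers are invented purely for illustration.
STRATEGIES = {
    "play_safe": (1.0, 0.0),     # small gain now, nothing later
    "take_damage": (-1.0, 5.0),  # hurts now, pays off later
}


def pain_averse_choice(strategies):
    """Discard anything that hurts right now, then take the best immediate reward."""
    painless = {name: r for name, r in strategies.items() if r[0] >= 0}
    return max(painless, key=lambda name: painless[name][0])


def goal_driven_choice(strategies):
    """Pick whatever maximizes the total reward, no matter when the pain comes."""
    return max(strategies, key=lambda name: sum(strategies[name]))


print(pain_averse_choice(STRATEGIES))
print(goal_driven_choice(STRATEGIES))
```

The first chooser never even evaluates the painful option, which is the blind spot described above. The second one has no such filter, so the option that hurts first wins as soon as its total payoff is higher.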

The self-destruction

An autoimmune disease is a condition in which your immune system mistakenly attacks your body. The immune system normally guards against foreign invaders, but in an autoimmune disease it attacks your own cells. Why does this happen? Doctors don’t know exactly what causes the immune-system misfire. There is neither consensus nor reliable data on why this phenomenon exists.

I wanted to include autoimmune disease because its mechanism of self-destruction has parallels to the idea of dark strategies, even if it is probably just an error in the system.

My dog was diagnosed with this disease in her brain, and she’s been through a lot. She had surgery on both of her legs and had all kinds of shots and operations. Shortly after these stressful events she got her diagnosis. My first thought was: “her body and mind went through so much stress that it activated a self-destruction mechanism.”

Obviously this is nonsense, but can you imagine? A person is held captive and tortured, and the body knows there is no other choice but to destroy itself from the inside out to stop the pain. An ‘exit button’.

Even self-destructive behavior could be part of some strategy. Destroying teammates could be part of a strategy. But we always meet resistance in these categories, and so we inevitably miss some great unorthodox strategies for achieving a goal.

The unorthodox strategies

Imagine there were an advanced investing bot. You just know it would use immense amounts of leverage and make very unorthodox moves, which would definitely include taking damage upfront for bigger gains later on. It would not shy away from the hurt. The picture is clear in my head: the bot would cause mayhem. People would be scared of it.

Just look at this bit of Dendi playing against OpenAI. He’s terrified! I would be too.

“He’s scary, he’s dominating..”

I know that feeling from my gaming days, when I would play against some well-known great player for the first time and his movements would just be out of this world. It truly is scary to face something so different.

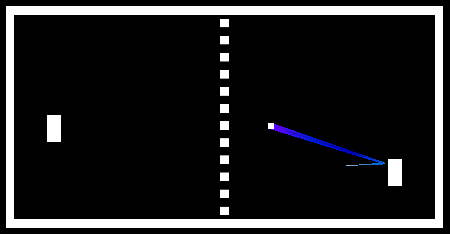

I remember some AI coming up with a strategy in a game of Pong, or something similar, where it would just lurk in the bottom corner, jerk up and down, and win. I don’t remember exactly what the game was or what happened, but I remember being fascinated that humans never figured this kind of strategy out…

I’m really curious to see, in the upcoming years, what kinds of unorthodox approaches AIs come up with on various tasks that we just wouldn’t have thought of.

Moral of the story: There exist some undiscovered, unintuitive, against-our-nature strategies that we have a blind spot for. Strategies that we just don’t see because of our nature. Maybe if we pay more attention to strategies through the lens of attacking ourselves, we could discover ways to implicitly gain advantages via that process. Maybe we would unlock a whole bunch of unorthodox strategies for executing a task.